How can pedagogical leaders structure the evaluation process to ensure it is meaningful and has an impact on student learning?

I thought a lot about this question throughout the PYP evaluation process. Having just finished a lengthy CIS accreditation process, the school now needed to prepare itself for another evaluative phase. Staff had only recently spent time in groups, gathering evidence and writing summaries of findings. Another process using the same structures would likely have disengaged them, turning evaluation into an administrative task instead of an opportunity for school-based inquiry. To be meaningful, the PYP evaluation process needed to prove that it was far more than an exercise in coordination. Which it is. As John MacBeath writes, evaluation provides schools with the opportunity to improve teaching and learning, refine strategic planning and structure internal professional development opportunities for staff.

However, this opportunity is only as good as what we make of it. Think about the classroom experience: a poorly designed learning engagement often turns students off, no matter how interesting the content is. A task delivered through a modality that clashes with a child’s learning style ends in frustration. A discussion that values some students’ opinions over others creates a sense of ownership in some students but not all. So in evaluation, just as in the classroom, our ability to construct meaningful experiences determines how successfully we achieve our aims with members of the school community. Pedagogical leaders must be responsive and act flexibly toward the realities of the school context in order to turn the evaluation process into a catalyst for positive change.

So how did we attempt to remain agile during evaluation? A few key understandings guided our approach:

1. The PYP evaluation provides the opportunity to develop understanding of “inquiry as a stance” across the school.

Early on in our evaluation, before we formed our inquiry groups, staff had the chance to tune in to the standards and develop descriptors for assessing the practices. Yet something was still missing: the emotional pull that encourages questioning and exploration in the inquiry process. We needed a provocation. To reiterate that the evaluation was about inquiring into student learning, I interviewed and videoed students, asking them one question: “What makes a good learner at our school?” The question, based on John Hattie’s work on Visible Learning (2008), allowed us to capture the diversity of student thought around learning dispositions. Would they express what we hoped they would? Was there a clear picture across the school? What trends were there in student responses?

The video interviews did indeed document varying ideas about what makes a good learner. Some students said it meant “listening to the teacher” or “being quiet”. Others described the importance of being a thinker, an inquirer or someone “hungry to learn”. Here is a snippet from our “What makes a good learner?” interviews: [Video]

The student responses sparked teachers’ interest and desire to find out more. In this way, the evaluation modeled the inquiry process: we started with a question hanging over our heads, “How are we really doing?” The evaluation allowed us to question, experiment with possibilities, make and test theories and defend positions, just as we do with students during a unit of inquiry. Focusing on the notion of “inquiry as a stance” throughout the evaluation process stressed that evaluation is about the process, not the product.

2. Catering for different working styles amongst staff increases the likelihood of a meaningful PYP evaluation process.

Some teachers construct meaning through writing, but not all do. For this reason, asking inquiry groups to write summaries of findings would not ensure that all teachers felt personal ownership over the evaluation process. To remedy this, I thought about Colin Robson’s claim that what is important is “the usefulness of the data for the purposes of the evaluation, and not the method from which it is obtained”. If we wanted to create opportunities for deep, evidence-based discussion in order to improve student learning, we needed to use methods that supported conversation. This meant doing away with a regimented, linear report format and allowing instead for iterative discussion captured in audio files. Each group recorded its conversations on a handheld device, noting the evidence that supported its argument for a particular rating. For visual thinkers, using the interactive whiteboard to reference files while speaking meant that discussion was linked to concrete examples of practice. Here is an example of the type of discussion we captured through audio recording, in which a group discusses Standard A6, “The school promotes open communication based on understanding and respect.” [Audio: A group discusses A6]

It seemed only natural that “documenting” these conversations would increase the quality of our self-study and lead to more meaningful action points for our strategic plan. In the second part of the audio recording component, groups verbally summarized their findings to identify next steps for school development. These summaries were then collated into a list of action points that informed our final action plan. Here the same group discusses one area for development that emerged from their process: [Audio: Summary and Action Points]

3. A school’s approach to PYP evaluation determines whether it is perceived as an “add on” or as integrated into the fabric of teaching and learning.

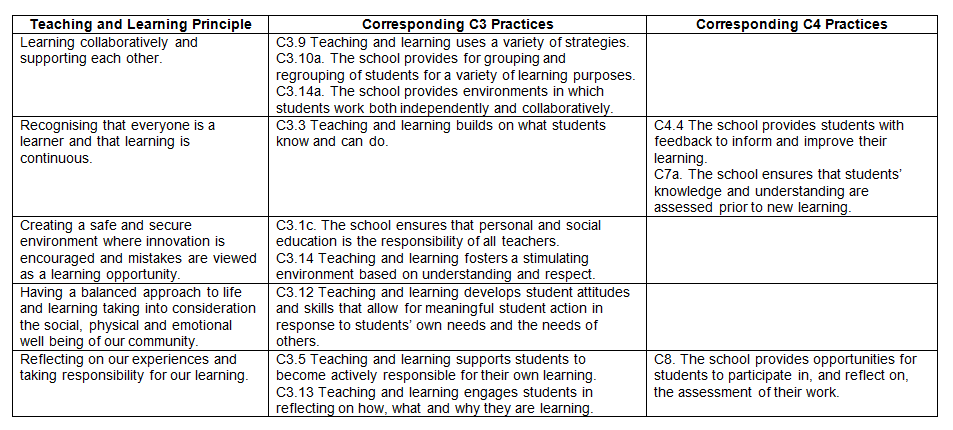

Another consideration while structuring our evaluation was ensuring that the process connected to what we already do as a school. Although a significant amount of time was dedicated to evaluation, we did not want it to be perceived as an “add on” to our routine. So, to make connections between the PYP standards and practices and our mission statement, I aligned the C3 (Teaching and Learning) and C4 (Assessment) practices with our Teaching and Learning Principles. These principles define the school’s core pedagogical values and “unpack” what the mission statement looks like in the classroom.

As we strive to embed these principles in our classroom practice, assessing our implementation of C3/C4 practices against the Teaching and Learning Principles made the evaluation process more relevant and addressed our unique school context.

To make the connections to teaching and learning even more evident, C3 and C4 practices were assessed through classroom observations and student interviews. In a series of Learning Walks, groups of teachers, released from class for a day, gathered evidence around a particular focus. At the end of each day, reflections and possible action points were collated in a shared Google Doc. Here is an example of how the Teaching and Learning Principles were matched with C3 and C4 practices for use in our Learning Walks.

4. Creating structures that gather multiple perspectives helps schools ensure that the PYP self-study process does not reflect a singular voice.

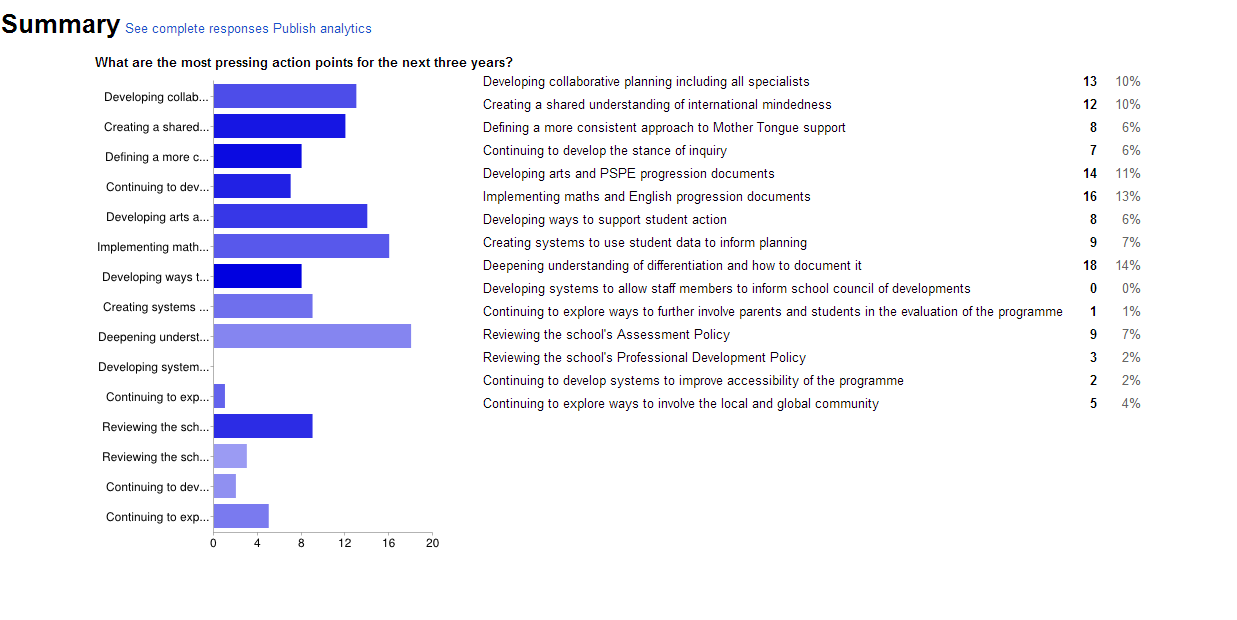

Lastly, when developing structures for our evaluation, it was important to ensure that everyone’s voice was present. Gustave Flaubert claimed, “There is no truth, only perception.” In the same way, we are more likely to find a collective “truth” about our strengths and areas for development as a school if we look for trends across multiple perspectives. We did this, for instance, in developing our action plan. As we all know, each new school year seems busier than the last. Streamlining our action plan and being clear about school priorities makes us more likely to succeed in implementing it. So, to uncover which action points were most pressing for teachers, I used a survey.

These results were then cross-referenced with data from parent focus groups conducted earlier in the year. In this way, the evaluation action plan reflects multiple perspectives and is owned by the entire school community.

References:

MacBeath, J. (1999). Schools Must Speak for Themselves: The Case for School Self-Evaluation. London: Routledge.

Hattie, J. (2008). Visible Learning: A Synthesis of Over 800 Meta-Analyses Relating to Achievement. London: Routledge.

Robson, C. (1993). Real World Research: A Resource for Social Scientists and Practitioner-Researchers. Malden: Blackwell Publishing.

—

Carla Marschall previously worked in Hong Kong at Quarry Bay School (English Schools Foundation) and in Germany as a PYP coordinator. Carla is a PYP workshop leader and field representative and a Lynn Erickson concept-based curriculum and instruction trainer. She is especially interested in the role of curriculum in helping students develop critical and creative thinking. Carla is currently involved in curriculum development for language in the PYP. She tweets as @carlamarschall.

As I prepare for a combined CIS/PYP visit (and the school prepares for a CIS/IB visit), I found your specific ideas extremely useful. The idea of focusing on conversation (not report writing) and connecting to what is already meaningful in the school rang true with how I have facilitated self-studies in the past. Your examples using audio recordings, interviews and other specific ideas give me inspiration and renewed commitment to make the process worth the effort and time, so that it can lead to meaningful reflection and action. Thank you.

Having been through this CIS and PYP process with Carla in Hong Kong, I found it easier because of the specific nature of what we looked at. Having teachers use audio files to report on findings made it more real and allowed us to bounce ideas off each other. Thanks, Carla, for your help here, and best of luck in Zurich.

Hi Lisa – Thank you for your comments. I agree that it can be challenging to keep the process fresh when, at the end of the day, schools have to present a report to a visiting team. Nonetheless, I think creative approaches help us mediate this and remind ourselves of the purpose of evaluation: to improve student learning. I am sure your CIS/PYP visit will be a successful one. Keep in touch about your progress.

Hi Rick – So nice to read your response. I’m glad you look back fondly on the process and feel it was meaningful for determining the school’s next steps. Good luck with your preparations and let me know how things work out.

Hi Carla,

We are about to embark on our 12-month self-study next year. Your ideas are great and have given me a lot to think about in how we manage our Evaluation self-study. I agree that if we make the Evaluation process part of our routine and not an ‘add on’, then staff will be more motivated to engage and gather evidence. It should therefore be as affirmative as it is evaluative. Thank you for sharing your ideas.

Kind regards,

Mary

Hi Mary – Exactly! When teachers did their Learning Walks to assess C3 and C4 practices, they were not assessing practices in an evaluative way but in an appreciative way. They looked only for evidence of the practice, not for evidence that we weren’t “doing” the practice well enough. This made teachers much more willing to have others come in and observe classroom practice on a regular basis. We then identified the practices for which we had lots of evidence and pegged them as areas where the school was more developed. Obviously, a school can’t work on everything at once, so this simply meant that other areas of school development needed to be prioritized in the next action plan. Thanks for the feedback!

Hello, I really enjoyed your approach to the evaluation process and its focus on improving student learning. Thank you. I have been reading lots of different blogs about self-studies, as we are just beginning the process. I am the PYP coordinator and have never gone through the self-study process before, which makes me very nervous. I have noticed that many blogs talk about inquiry groups looking at different standards, and I wondered how these groups were determined.

A little background: I am in a small school that has only one class per grade, so I have a total of 8 homeroom teachers (JK3 to grade 5), 7 specialist teachers (art and PE specialists for JK3 and JK4; and, for kindergarten to grade 5, French, 2 × PE, library and music) and one teaching assistant. This is a total of 16 educators and me. What would be a good way of dividing things up? Any ideas would be greatly appreciated.

Hi Wendy – Thanks for your comments. I think the self-study process definitely has a different set of challenges in a small school than in a large one. Instead of having 30, 40 (or more!) teachers to put into groups and delegate standards to, in a small school you have to ensure you are not overloading your staff. I don’t know your school context in depth (so I can only provide broad suggestions), but my recommendation would be to brainstorm ways that teachers can work in groups without having myriad practices to address. For example, you could think about putting teachers into groups of three for each of the standards and then delegating practices across six groups. This means each group has only 2–4 practices (which is more manageable) and allows for depth of inquiry instead of a superficial “reading” of the school. Discussions can then be held in larger groups (for example, three trio groups together) about their practices to provide some critical questioning. All the best with structuring this. I am sure it will be a meaningful process for your school community!

Hi Carla!

Thank you for this insightful post. We will soon be beginning our self-study process, and as this is my first experience with evaluation, I am excited as well as a bit nervous. I have a few questions specific to the evaluation process and would appreciate your guidance. Would it be possible to connect through email?

Best,

Vandana

Hi Carla,

Reading this post has been really helpful. We are about to begin our self-study process and, just as Wendy said, ours is a very small school with few staff members. Most of our staff this year are new not just to the PYP, but to teaching as well. I am a bit nervous about the whole process, since a lot of hand-holding is required in various areas. In such a situation, what do you suggest is the best practice to follow? Also, could you share when you filled in the self-study questionnaire? Early in the self-study process or towards the later part?

Hi Vandana,

I am happy to help where I can. If you want to give me your e-mail address I can contact you. Alternatively you can send me a private message via Twitter. I am @carlamarschall.

Hi Srilakshmi,

Thanks for your feedback. It sounds like you have an interesting opportunity at your school! Since you have teachers new both to teaching and to the PYP, I would suggest doing a lot of work at the beginning of the self-study process around what the practices would look like (for example, by co-constructing checklists, indicators, etc.) so that you know when those practices are well implemented. When in doubt, go back to the PYP documentation and pull out an appropriate paragraph or two to reflect on during a meeting, connected to the practices you are investigating at that time.

I would view the self-study as a professional development opportunity for your staff and perhaps speak to the rest of your leadership about allocating some PD funds to the self-study. Use this money to release teachers to see the kids and their colleagues in action! I suppose at the heart of this is also having a safe and secure environment where people can take risks and learn from each other. If you feel this might not be strong yet, I would put some effort toward it too.

We filled in the self-study questionnaire towards the end, as we needed evidence to back up our judgments and opinions. If you are the one who will be filling in the questionnaire, I would put some dates in your calendar now when you can have uninterrupted time (ideally a few days!) to complete it thoughtfully.

I hope this helps you somewhat. Good luck on your work!

Carla

Hello Carla, I am taking up the role of CIS accreditation coordinator. Kindly forward some useful tips for a preliminary visit. I will be grateful.

EMAIL jawahug@yahoo.com

Great example of inquiry-based engagement with the faculty, and an amazing example of how to use data and match it with the standards and practices.

This post offers a perspective on how a structured framework, such as the IB’s, can be managed creatively and meaningfully when the whole school community is involved.

I really appreciated the step of matching the Teaching Principles to the corresponding standards and practices. It is a powerful tool for ongoing professional development across the faculty’s range of expertise, and an ongoing tool for reflection and implementation.

What changes happened after all this process?

Thank you, and congratulations on the excellent work.


Hi Carla,

I really like your concrete examples and your idea of viewing the evaluation as an inquiry into student learning. I have a further question: what did you do after connecting principles and practices through the Learning Walks? Did you group the teachers? How did you group them to work on improving implementation?

Kindly,

Yao